Ultrasound Intraoral Imaging

Clinical Use

Clinical research summary and references [- July 2018] (Compiled by J Cleland & J Isles, Strathclyde University, UK) (pdf)

Ultrasound metrics used in studies of disordered speech. [Feb 2020] (Compiled by Joanne Cleland, University of Strathclyde) (pdf)

Ultrax Project

An investigation of the efficacy of ultrasound as a feedback tool for diagnosis and treatment of speech disorders funded by the UK Engineering and Physical Sciences Research Council 2011-2014. Work is being carried out by Articulate Instruments Ltd in co-operation with CSTR, University of Edinburgh and CASL, Queen Margaret University. The project has a further aim of enhancing the ultrasound image of the tongue in order to provide clearer feedback and assessment.

British Columbia

Ultrasound has been used by speech-language pathologists in school districts in British Columbia for about 10 years as a visual feedback tool for assessment and therapy. An introduction to how ultrasound is used in BC can be found on the UBC School of Audiology and Speech Sciences website.

UltraPhonix

The UltraPhonix project (2015-2016) aims to evaluate the effectiveness of Ultrasound Visual Biofeedback (U-VBF) therapy in treating Speech Sound Disorders (SSDs) which have been unresponsive to traditional speech therapy methods. The project is run jointly by Queen Margaret University and Strathclyde University.

Ultrasound Biofeedback for Therapy-Resistant Speech Sound Disorders in Children

The project at Syracuse University 2013-2016 will refine ultrasound biofeedback based intervention procedures, determine if different profiles of children with speech sound disorders respond to the intervention, and compare the outcomes of this approach to outcomes achieved with traditional speech therapy.

Multicenter NIH Speech Remediation Project

The NIDCD multisite translational grant (CUNY, University of Cincinnati, Haskins, New York University and Syracuse University), 2014-2019, focuses on direct applications in the clinic: the use of ultrasound for assessment and visual biofeedback for children who misarticulate /r/.

University of Sydney

Planning to investigate and develop treatments for developmental speech disorders, apraxia, cleft palate, head and neck cancer, hearing impairment, swallowing and dysarthria using ultrasound biofeedback.

University of Strathclyde

Project 01 Jan 2017-31 Mar 2018; Visualising Speech: Using Ultrasound Visual Biofeedback to Diagnose and Treat Speech Disorders in Children with Cleft Lip and Palate

Phonetics

Using ultrasound for Speech research

With ultrasound, it is easy to get a moving image representing the tongue, but it is important to understand the way ultrasound works in order to get the best and most accurate image. Ultrasound tutorial (draft in progress)

Seeing Speech teaching resource

The Seeing Speech phonetics teaching web resource is now available

This resource provides teachers and students of Practical Phonetics with synchronised ultrasound video, audio and 2D/3D diagrams of modelled speech and spontaneous speech (drawn from collected Ultrasound Tongue Images and MRI corpora).

Dynamic Dialects Project

Dynamic Dialects is the product of a collaboration between researchers at the University of Glasgow, Queen Margaret University Edinburgh, University College London and Napier University, Edinburgh. Dynamic Dialects is an accent database, containing an articulatory video-based corpus of speech samples from world-wide accents of English. Videos in this corpus contain synchronised audio, ultrasound-tongue-imaging video and video of the moving lips. We are continuing to augment this resource.

Echo B

Which ultrasound system do I need? Frequently Asked Questions (EchoB Micro FAQ.pdf)

The EchoB is a compact yet accurate black and white system well suited to speech research.

No longer available

Key Features:

- Portable: 62 x 210 x 165mm ; 1.6kg

- Hardware sync signal: TTL pulse on completion of every frame allowing fully automated, accurate audio sync.

- Speech capture software: Integrated with AAA capture/analysis software

- Raw scan data capture: Saves disk space and allows more accurate analysis. No torn images.

- Microconvex probe: 5-8MHz probe recommended for imaging children and small to medium sized adults

- High frame rate: Frame rate up to 120Hz (more typically 87Hz for 70mm depth; 70% FOV)

- Low Cost

- No fan noise: There is no cooling fan, so the microphone can be near the machine.

- Low frequency probe option: 2-4MHz convex probe for better penetration when imaging larger adults

- Good image quality: 128 echo beams per full scan mean image detail compares well with top end systems.
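
The frame rates quoted above depend on imaging depth, the number of echo beams and the fraction of the field of view that is scanned. The following is a minimal sketch of the usual upper bound on frame rate, assuming one beam is acquired at a time and a conventional soft-tissue sound speed of 1540 m/s. The function name and the `pulses_per_line` parameter are illustrative and not part of any Articulate Instruments software; real systems run below this bound because of processing and switching overheads.

```python
SPEED_OF_SOUND_M_PER_S = 1540.0  # conventional soft-tissue value (assumption)


def max_frame_rate_hz(depth_mm, n_beams, fov_fraction=1.0, pulses_per_line=1):
    """Upper bound on frame rate when beams are acquired one after another."""
    # Number of scanlines actually fired for the chosen field of view.
    lines = n_beams * fov_fraction
    # Time for one pulse to reach the maximum depth and echo back.
    round_trip_s = 2.0 * (depth_mm / 1000.0) / SPEED_OF_SOUND_M_PER_S
    return 1.0 / (lines * pulses_per_line * round_trip_s)


# Illustrative settings: 70 mm depth, 128 beams, 70% of the field of view.
single_pulse = max_frame_rate_hz(70, 128, fov_fraction=0.7)
# Firing two pulses per scanline (for example, for smoothing) halves the bound.
double_pulse = max_frame_rate_hz(70, 128, fov_fraction=0.7, pulses_per_line=2)
print(round(single_pulse, 1), round(double_pulse, 1))
```

Reducing the depth or the field of view raises the bound, which is why the maximum and typical frame rates differ.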

Radius 10mm; Frequency 5-8MHz; FOV 156°

Radius 20mm; Frequency 2-4MHz; FOV 104°

Contact us for details on pricing and performance

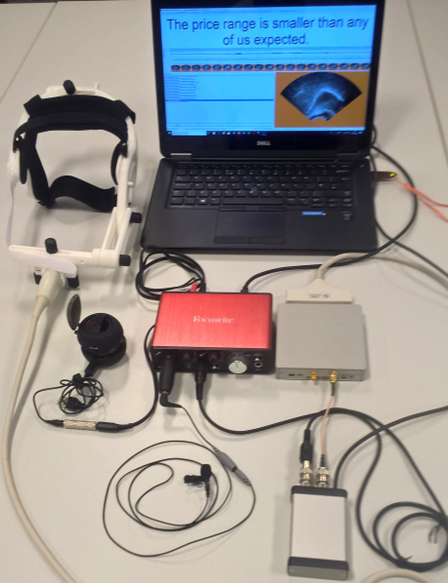

Micro/SonoSpeech

Overview

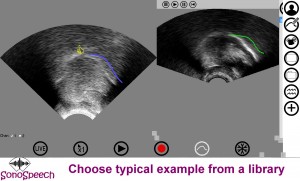

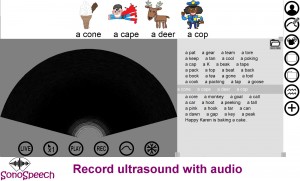

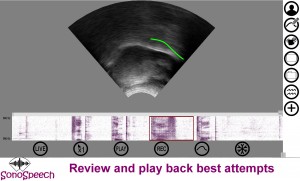

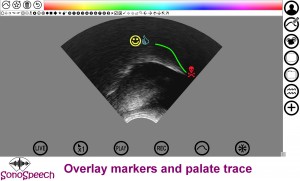

This is the latest ultrasound technology, superseding the EchoB, and can be used for research or as a visual biofeedback tool. Both configurations are based on the same pocket-sized hardware. The Micro system has an additional frame synchronisation output which, when used in conjunction with the AAA software and an audio bundle, automatically and precisely aligns the ultrasound frames with the audio. SonoSpeech is a simplified version of the Micro designed specifically for clinical intervention. Cost and cabling are minimised, and the SonoSpeech software provides an uncluttered, intuitive interface for visual biofeedback.
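
To illustrate the idea behind frame synchronisation, the sketch below recovers one timestamp per ultrasound frame from a sampled sync signal by finding rising edges. It assumes the pulse train has been recorded as a channel alongside the audio at a known sample rate; the function name, threshold and sample values are hypothetical, and this is not a description of how the AAA software does it.

```python
def frame_times_from_sync(sync, sample_rate_hz, threshold=0.5):
    """Return the time in seconds of each rising edge in a sync channel."""
    times = []
    for i in range(1, len(sync)):
        # A rising edge is a sample at or above the threshold whose
        # predecessor was below it.
        if sync[i - 1] < threshold <= sync[i]:
            times.append(i / sample_rate_hz)
    return times


# Hypothetical sync channel sampled at 1 kHz with two pulses.
sync_channel = [0, 0, 1, 1, 0, 0, 0, 1, 0]
edges = frame_times_from_sync(sync_channel, 1000)
print(edges)
```

Because each pulse shares a timebase with the audio samples, every frame can be placed on the audio timeline without manual alignment.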

Both systems can operate at image frame rates of up to 140 fps, although they are more typically used in the range 80-120Hz. Like the EchoB system, they store the data in a raw, envelope-detected beam format. This saves space and allows a range of image processing to be done during or after recording to enhance the display without altering the data on which that image is based. It also gives Articulate Instruments (and anyone else who wishes) the opportunity to develop image enhancement in the future that can be applied retrospectively to historic recordings.
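
Displaying beam-format data requires mapping each sample on each beam to a position in the image. The following is a minimal sketch of that geometry for a convex probe, assuming beams are evenly spaced across the field of view and radiate from the probe's centre of curvature. The function and its parameters are illustrative and do not describe the actual file format or the AAA software.

```python
import math


def beam_sample_to_xy(beam_index, n_beams, depth_mm, radius_mm, fov_deg):
    """Map one sample on one beam to Cartesian (x, y) in mm.

    The origin is the centre of the probe face; y points into the tissue.
    """
    if n_beams < 2:
        raise ValueError("need at least two beams to span a field of view")
    # Beams are assumed to be evenly spaced across the field of view.
    half_fov = math.radians(fov_deg) / 2.0
    theta = -half_fov + beam_index * (2.0 * half_fov) / (n_beams - 1)
    # Distance from the probe's centre of curvature to the sample.
    r = radius_mm + depth_mm
    x = r * math.sin(theta)
    y = r * math.cos(theta) - radius_mm
    return x, y


# Illustrative values only: 3 beams over 90 degrees, 10 mm probe radius,
# sample taken 10 mm from the probe surface.
left = beam_sample_to_xy(0, 3, 10.0, 10.0, 90.0)
centre = beam_sample_to_xy(1, 3, 10.0, 10.0, 90.0)
print(left, centre)
```

Since this mapping is applied at display time, the stored beam data stay untouched and different enhancement steps can be tried on the same recording.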

Micro

Micro research bundle (Brochure pdf)

SonoSpeech

SonoSpeech bundle (brochure pdf)

Art

Key Features:

- Portable: 136 x 189 x 28mm ; 0.77kg (half the size and weight of EchoB)

- Hardware sync signal: TTL pulse on completion of every frame allowing fully automated, accurate audio sync.

- Speech capture software: Integrated with AAA capture/analysis software

- Raw scan data capture: Saves disk space and allows more accurate analysis. No torn images.

- Convex probe: 1-5MHz, 50mm radius probe recommended for imaging adults (microconvex only available in endocavity form)

- High frame rate: Frame rate up to 200Hz (more typically 87Hz for 70mm depth; 70% FOV)

- Medium cost

- No fan noise: There is no cooling fan, so the microphone can be near the machine.

- Parallel scanning: Faster frame rates without compromising image quality

- Good image quality: 192 echo beams per full scan mean image detail compares well with top end systems.

Other Systems

We offer complete systems for recording and analysing ultrasound image sequences based on the cost effective scanners built by Mindray. These scanners are portable and provide an NTSC video output which can be de-interlaced to provide ~60 frames per second. Although some frames may have discontinuities, these models provide the clearest image sequences we have found for video-based ultrasound. See Ultrasonix for superior but significantly more expensive cineloop data transfer.
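
De-interlacing works because each NTSC video frame carries two fields captured half a frame period apart: one on the even rows and one on the odd rows. The sketch below splits a frame into its two fields and gives each a nominal timestamp. The function names are illustrative, and which field comes first in time depends on the video source, so the order used here is an assumption.

```python
NTSC_FRAME_RATE_HZ = 30000 / 1001  # about 29.97 interlaced frames per second


def split_fields(frame_rows):
    """Split a frame (a list of rows) into its even-row and odd-row fields."""
    return frame_rows[0::2], frame_rows[1::2]


def field_times(frame_index):
    """Nominal start times in seconds of the two fields of one frame.

    Assumes the even-row field is captured first.
    """
    frame_period = 1.0 / NTSC_FRAME_RATE_HZ
    start = frame_index * frame_period
    return start, start + frame_period / 2.0


# Hypothetical four-row frame, with strings standing in for rows of pixels.
frame = ["row0", "row1", "row2", "row3"]
first_field, second_field = split_fields(frame)
print(first_field, second_field, field_times(0))
```

Each field has half the vertical resolution of the full frame, which is the price of doubling the temporal resolution to roughly 60 images per second.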

http://www.ultrasonix.com/research/clinical/linguistic

Sonix Tablet

Choosing a system

There are many manufacturers of diagnostic ultrasound machines and each manufacturer has several models. New models are being brought out all the time, so it is worth looking around. The three key elements you need from your system are: basic black and white B-mode scanning; a means to get a good, clean image sequence from the system and synchronise it with audio; and suitable transducers.

All you need is B&W B-mode

You will find a Black&White B-mode option on all ultrasound machines. More expensive models will provide colour doppler and 4D imaging, neither of which have been found to be useful for speech research.

Image quality

More expensive machines do not necessarily provide better image quality. Ultrasound reps are often not familiar with the requirements for clear, distinct dynamic images. The only way to be sure is to record the image stream before you buy and examine the clarity of each image. In particular, you need to switch off any settings that despeckle by averaging frames, as this blurs the image wherever the tongue is moving. Be aware also of despeckling or smoothing that operates by sending several pulses per scanline, as this will reduce the internal frame rate of the ultrasound system. In general, you want to achieve internal frame rates of 60Hz and above to avoid discontinuities in individual images or duplicate frames in the image sequence.

Accessories

The ultrasound probe can be hand-held under the chin, but for more stable imaging a headset or other stabilisation method can be used.

The UltraFit Headset replaces the aluminium headset described below. Like its predecessor, it is fully adjustable and has been used with adults and children as young as five years. The headset is lighter, more comfortable after prolonged use, and more quickly and easily adjusted to fit. It maintains the probe in the midsagittal plane and restricts rotational and translational movement within the midsagittal plane. The amount of probe movement relative to the head is determined by a number of factors, including how firmly the headset is fitted and how much force is applied to the transducer by jaw opening during speech and swallowing.

Weight: 0.3kg (excluding probe weight)

brochure pdf

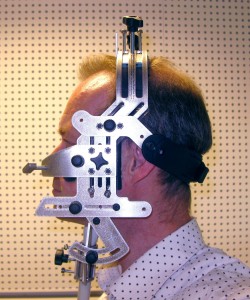

The Aluminium Probe Stabilisation Headset is designed for ultrasound tongue imaging research. It is fully adjustable and has been used with adults and children as young as six years. The outer shell is 3mm aluminium, while the inner is made from polycarbonate with gel and neoprene pads. The headset maintains the probe in the midsagittal plane and restricts rotational and translational movement within the midsagittal plane. The amount of probe movement relative to the head is determined by a number of factors including how firmly the headset is fitted and how much force is applied to the transducer by jaw opening during speech and swallowing.

Weight: 0.8kg (excluding probe weight)

R&D

Real-time contour tracking and vocal tract estimation

New real-time live tongue tracking (work in progress). [Will also be available to users of EchoB, with an offline version for users of Ultrasonix.] The following is an example of the tracker on pre- and post-therapy speech. The tracker is fully automatic and speaker-independent, requiring no seeding or training. Although it occasionally mistracks, it recovers automatically. We are working to eliminate the mistracking and add further features.

Pre-therapy client* presenting with palatalised lateralised /r/. Video shows A) plain ultrasound image (1/2 speed) B) plain ultrasound with live edge tracking (1/2 speed) C) Edge tracking carving out vocal tract (1/4 speed) D) Edge tracking within vocal tract.

Post-therapy.

This 02SSD Crab pdf shows the difference between pre- and post-therapy /r/ in the word “crab”, highlighting the significance not only of shape but also of the context of the location of the constrictions formed within the vocal tract.

[* Client recorded as part of the Ultrax Project funded by EPSRC EP/I027696/1 ]